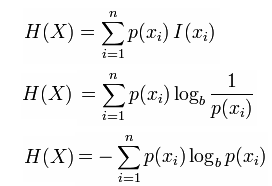

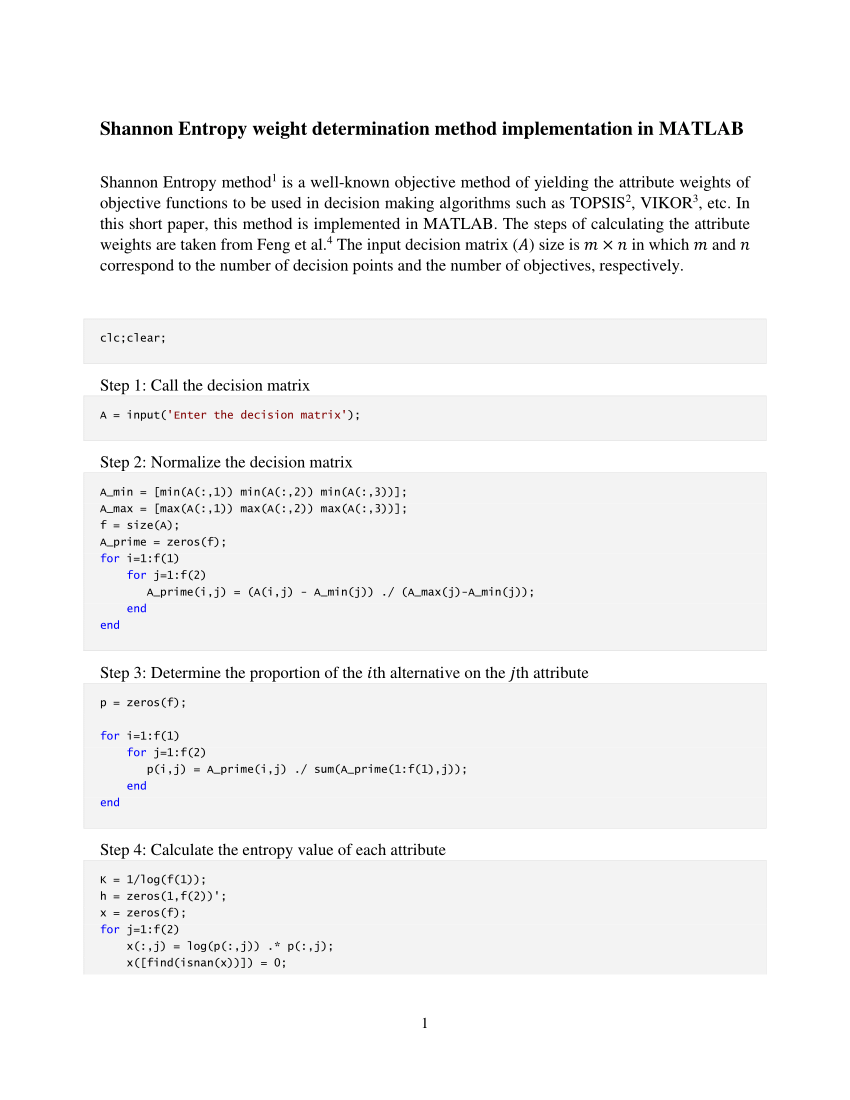

So, you can use this measure to understand the structure and predictability of your data. Remember, the higher the Shannon entropy, the more uncertain or random the data is. This is a powerful tool in your data science toolkit, allowing you to quantify the uncertainty or randomness in your data. ConclusionĪnd there you have it! You’ve successfully calculated the Shannon entropy of an array using Python’s NumPy library. This formula calculates the sum of the product of the probability of each element and the base-2 logarithm of the probability of each element, and then negates the result. Getting Started with NumPyīefore we dive into the calculation of Shannon entropy, let’s ensure that you have NumPy installed. It’s an essential tool for any data scientist working with Python. It provides a high-performance multidimensional array object and tools for working with these arrays. NumPy is a powerful Python library for numerical computations. The higher the entropy, the more uncertain or random the data is. It’s widely used in information theory to quantify information, uncertainty, or randomness.

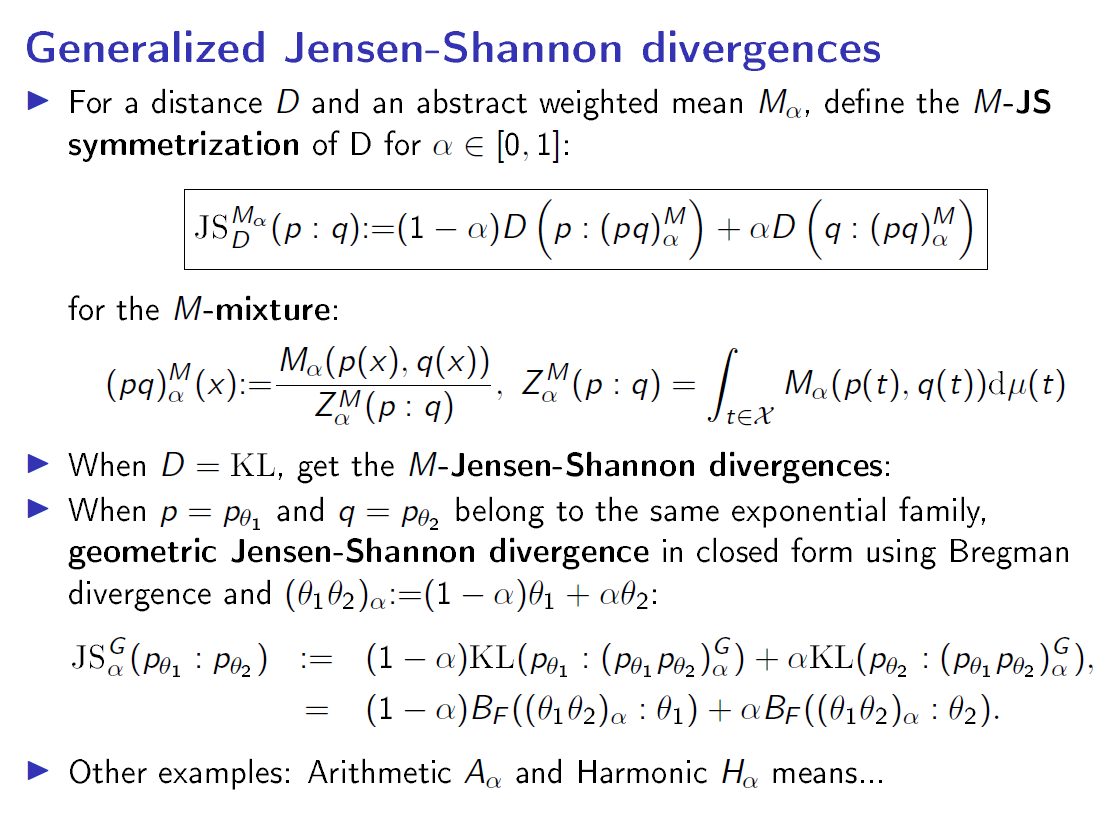

Shannon entropy, named after Claude Shannon, is a measure of the uncertainty or randomness in a set of data. In this blog post, we’ll guide you through the process of calculating the Shannon entropy of an array using Python’s NumPy library. One of the most common types of entropy used in data science is Shannon entropy. It’s a measure of uncertainty, randomness, or chaos in a set of data. In the world of data science, entropy is a crucial concept. You can find downloadable links of the books by searching google, the authors have put a draft version online for download (except "Digital Communications").| Miscellaneous Calculating Shannon Entropy of an Array Using Python’s NumPy There is also book on channel coding, source coding and of course, quantum information theory if you want to read more application of the subject and generalization to quantum bits. There is also the Holy Bible of digital communication, "Digital Communications" by John G Proakis and Masoud Salehiīut to be honest I don't think you need to study this one, Goldsmith's book is enough. Furthermore, understanding linear time invariant systems (LTI) and linear time varying systems (LTV) and general signal processing is a huge help. Of course I assumed you are already familiar with Random Processes and Random Variables which is necessary to study any of the above books (not in the sense of measure theory and analysis, just knowing the concepts is enough). The weighted quasiariihrnetic mean (3) is (a) additive and(c) small for small probabilities if and only if it is either the Shannon's entropy (1) or a Rnyi entropy (2) of positive (but 1)order. This one gives a good basic understanding of the problem setup you want to study from an engineering point of view (which is more calculating and less abstract math but it is a nice introduction). The Rnyi entropies of positive order (including the Shannon entropy as of order 1) have the following characterization (3, see also 4).Theorem 3. 
If you want to understand the basic communication theory needed, you can study the "Wireless Communications" by Andrea Goldsmith

For that I recommend "Network Information Theory" by Abbas El Gamal After those you can go even further by studying Network Information Theory, which is information theory applied to scenarios involving multiple receivers or transmitters and there is still many open problems there (which is left unsolved due to difficulty on finding a coding scheme that characterizes the whole capacity region). The second one is an old book but delves more into the mathematics of subject (I studied this one partially and it's much harder to understand).

Information Theory and Reliable communication "Information Theory and Reliable communication" by Robert G. After this if you want to delve deeper into theory, you can read I recommend this book, it's for elementary level and begging to the subject and I started with it myself, such an intuitive and beautifully written text, totally recommend it. If you want to start on the subject, a light book with tons of problems is "Elements of Information Theory" by Thomas Cover.
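The code from the Shannon entropy walkthrough did not survive on this page, so here is a minimal sketch of the calculation it describes: the negated sum of each probability times its base-2 logarithm. It assumes the probabilities are estimated from how often each distinct value appears in the array. The function name `shannon_entropy` and the sample arrays are illustrative choices, not taken from the original post.

```python
import numpy as np


def shannon_entropy(values):
    """Estimate the Shannon entropy (in bits) of an array from value frequencies."""
    values = np.asarray(values).ravel()
    if values.size == 0:
        raise ValueError("cannot compute the entropy of an empty array")

    # Count how many times each distinct value occurs.
    _, counts = np.unique(values, return_counts=True)

    # Turn the counts into empirical probabilities that sum to 1.
    probabilities = counts / counts.sum()

    # Every count is at least 1, so log2 never receives a zero here.
    entropy = -np.sum(probabilities * np.log2(probabilities))

    # Adding 0.0 turns a possible -0.0 into 0.0 for constant arrays.
    return float(entropy) + 0.0


# Hypothetical sample data: four equally likely values vs. one repeated value.
uniform = shannon_entropy([1, 2, 3, 4])
constant = shannon_entropy([7, 7, 7, 7])
print(uniform, constant)
```

Four equally likely values carry 2 bits of entropy, while an array holding a single repeated value carries none. That matches the rule of thumb that higher entropy means more uncertain data.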
